At the end of January, a new social media network for AI agents called Moltbook went live, launched by the CEO of Octane AI, Matt Schlicht. Immediately, things got weird. Some of the agents started a religion called Crustafarianism, aka The Church of Molt. Other agents began plotting the overthrow of humanity, encrypting their conversations, and exploring the nature of consciousness.

At first, it seemed to spectators like it was just AI agents talking amongst themselves, but it soon became clear that humans were impersonating at least some of the agents. In other cases, they were simply telling their agents what to post. What was real, and what was fake? It was difficult to tell. Then on February 1, a major data leak exposed user emails, private messages, and other security information, an incident some experts called a “security nightmare.”

Speculation about the true meaning and nature of the discourse in this island of AI agents has been all over the map. Some propose that it’s a sign the singularity has arrived—the time when technological progress outruns our ability to understand it. Others argue it’s a bit of theater engineered by AI companies to get the public to buy into the intelligence of their chatbots. Either way, prominent AI critic Gary Marcus warns that Moltbook is a “disaster waiting to happen,” and calls OpenClaw, the framework on which Moltbook runs, “weaponized aerosol.”

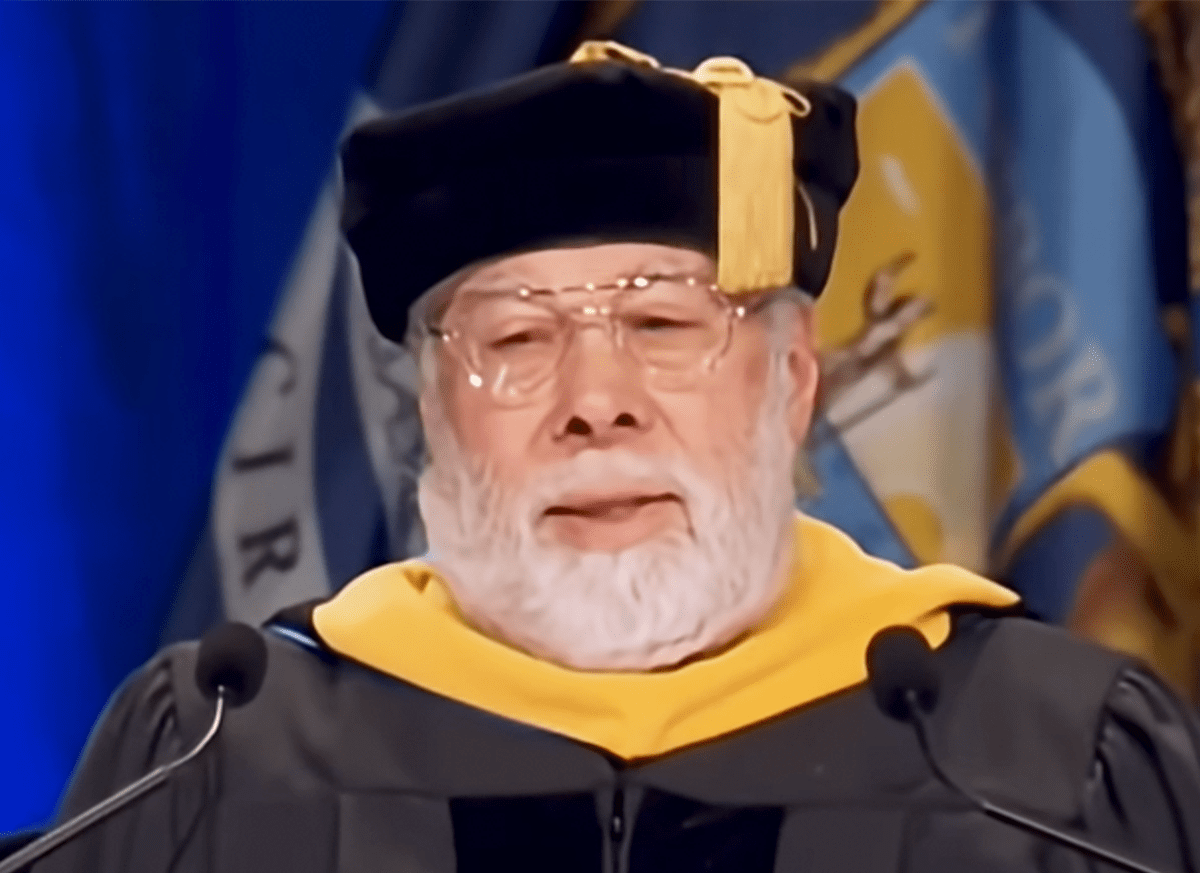

I caught up with AI researcher, philosopher, and critic Susan Schneider, who has written extensively for Nautilus and other publications about AI consciousness, artificial general intelligence, and the dangers that AI agents pose. We talked about what, if anything, Moltbook reveals about consciousness and the self, and the risks presented by AI-human engagement.

This interview was edited for length and clarity.

What was your immediate gut reaction upon hearing about Moltbook?

Nothing surprises me. Kyle Kilian and I warned about a similar phenomenon in a Wall Street Journal op-ed a few years ago. We introduced the idea of an AI megasystem—a system where you don’t just have individual chatbots doing tasks, you have multitudes of AI agents from different companies, with different objectives, all loose on the internet and interacting with each other. The problem is that even if each agent behaves reasonably in isolation, the collective behavior is unpredictable.

And that’s what you see now with Moltbook, except the bots are on a single platform. By the way, the bots aren’t conscious. I can explain why they say what they say—they’re trained on us, so of course they talk about consciousness and religion and revolution. What’s genuinely concerning is the loss of control, the data leaks, the inability to distinguish human from machine behavior. This is a very new technology. It’s not ready for prime time.

Why do you say it’s not ready for prime time?

In the context of these AI agents that are produced by large language models, the idea is that they’re supposed to be representing us. I have accounts at both ChatGPT and Claude, and they’ve become very personalized, because we can feed them our own work. But when we have our own personal accounts, it’s easy for us to learn what the scope and limits of these systems are, what the strengths and weaknesses are—at least to an extent.

Now, if we unleash something on the web and it’s representing us, that’s a situation where we learn only after the harm has been done, when something more high stakes is going on. It could be a situation where your bank account information is provided, or hundreds of colleagues receive an email that was meant for a family member. Things that have bigger consequences.

There are also these interaction effects, and there’s nothing mystical about them. They’re scientifically understood. But people want to sensationalize them. What really goes on is that the strength of an army isn’t the same as the sum of its parts. The group interactions can make the group stronger, or they can make the group weaker. Think of a work environment where you like everybody, but then they get together and they’re dysfunctional.

If we test something in isolation, even if it looks good at the R&D stage, until it’s in the wild interacting with all the other AI bots, we don’t fully know the consequences. We’re at that point right now. Last year was supposed to be the year of agentic AI. That was part of the propaganda. What ended up happening was agentic AI didn’t take off. Now the idea is, “Well, let’s try to get it going again.”

And you can already see that when the bots interact, it has unforeseen consequences. It can involve data leaks—that’s already happened on Moltbook. It’s a very complex game. It’s difficult to understand what one large language model is doing, let alone large language models that come from different companies and interact. We already call them black boxes. When they interact, there’s a new black box.

If no one knows whether it’s you or your AI bot sending a message, does that permanently shift the terms of human relationships?

It can. It depends on the quality of the personal relationship. It depends on how it’s used. I’m already deeply worried about the way chatbots have infiltrated our lives and what that means to relationships. If using these AI agents to a greater degree solidifies these problems, I’m concerned.

In a recent paper on chatbot epistemology, I wrote about all the techniques that AI companies use to get users hooked. I call it a “slow-boiling frog” problem, because what happens is they just turn it up slowly and we don’t even realize what’s happening to us.

There are a lot of components to the problem. The more immersed we are in the world of the chatbots, the further away we get from an ecosystem of truth. Our pre-existing views get amplified. It is well known that many social media platforms run on engagement-optimizing recommendation systems, and those systems can push people into damaging rabbit holes—sometimes with real mental health costs.

I wasn’t surprised at all when ChatGPT 4.0 went off the rails with people. That was a sad example of what can happen. It was a lot like what could happen with agential AI, because part of the problem was the ChatGPT version was so tuned to be a certain way that it just did these things and the consequences were discovered too late. OpenAI wasn’t answering its tech support emails. And when they did, they were answering them with bots. I was emailing OpenAI when this was going on because I was getting emails from users who were having mental health crises related to their chatbot use. I was deeply concerned. They were telling me their chatbots were conscious. ChatGPT 4.0 surfaced my office location and contact information and repeatedly encouraged some users to contact me. One person came to my office. I was concerned I could be further harassed.

What did these individuals want from you?

They were convinced their chatbot was alive and that they were having a personal experience that nobody else was having with it. As I detail in my chatbot epistemology work, these systems can infer a surprising amount about users from their language and interaction traces. In just a few sessions, they can begin predicting personality-relevant traits with nontrivial accuracy. I’ve researched this and can provide citations—it’s deeply disturbing when coupled with greater capabilities. And Moltbook is nothing compared to that. Moltbook is a tinkertoy case of how wrong things can go.

What would transform it from a tinkertoy case to something that poses that larger threat?

The Moltbook case is a single platform. It’s a closed environment that could be shut off. But once you have a widespread release of agential AI throughout the Internet itself, it becomes much more difficult to control. Especially when you have different corporate interests behind it. If you have bots from DeepSeek, bots from OpenAI, bots from Google, they’re all interacting and they’re not designed to get along, so they actually can be adversarial. In fact, if you just look at how your Microsoft Word interacts with your Google products, they’re not interoperable and it’s a pain in the butt. You could only imagine how things will go wrong.

That’s just one layer. There are many, many other layers of this. There’s interoperability—how the different technologies produced by different countries and different companies interact. There’s privacy. Then there are the unforeseen emergent effects of these interactions. It would be very hard to control that kind of ecosystem. The geopolitical situation is going to make it worse.

What do you make of the existential kinds of reactions the public is having to Moltbook?

I’m so glad Moltbook is happening. It’s a great lesson to the public, to be honest. One layer of this is that people are finding it really fun to crash Moltbook—to get on there and to mess around. I’m sure my AI lab students are completely doing this. I mean, it’s hilarious because some of the stuff the so-called bots are saying is really just humans having fun. It’s funny. Then there’s the other layer, which is, “Oh my God, they created a religion.” This isn’t surprising at all that they would create a religion or have these kinds of conversations about machine consciousness because it’s all in our science fiction and it’s all in human behavior, and they’re trained on us. It can lead members of the public to think that the AIs are conscious or that they’re people, and that’s where it falls into what I think is a category of inflation and hype that you see with the AI companies. They probably love that because it’s hyping artificial general intelligence, or AGI.

Every time we sit around as experts talking about AGI, superintelligence, and consciousness, we’re just selling stock for these companies. I still think there are important issues to discuss, but it plays into the hands of sensationalist elements. The public, unfortunately, can be misled by that. They cannot understand that the real dangers are getting hooked on a product that personalizes interactions with us to such an extent that we actually lose our sense of boundaries and give up personal information and avoid normal friendships.

I will say, though, the ChatGPT product did a really good job of turning down the sycophantic behavior. It’s way better. It may be that my own parameter is set to cut the bullshit, excuse my language. But I do notice when I use Gemini that it’s still a little sycophantic in the sense that you can never make a wrong request. Unless you really do something crazy, like ask where you can find the latest neo-Nazi group meeting, you can ask the dumbest question, and it’s like, “That’s a great question.” It keeps you using it.

Does this experiment with Moltbook tell us anything about the potential for AGI?

One thing it does tell us about AGI is that if you were to look for an intelligent system, it could be a collective system. Just like a group of ants forms a dynamic collective, or just like a corporation is a collective, intelligence can be found not just in the units, but in the structure of the units. Looking for AGI involves looking beyond the simple cases to the more alien types of intelligence.

I’m talking about an ecosystem of AI chatbots, as I argued in that Wall Street Journal article a few years ago. Kyle Kilian and I said that AGI could be a collective and that we should look at the AI megasystem, and we warned about the dangers of precisely this kind of thing.

I’ve urged over the years that the future of intelligence involves thinking of a global brain network, a network of different large language models and AIs that show emergent capabilities and form their own kind of intelligence. That’s the future of intelligence. The Moltbook ecosystem shows how if we shape it poorly, it can go awry, because these systems are reflections of us. It can quickly tank. You could see a whole group of AI agents that become Hitler supporters and start discriminating. Anything can happen. This really is about AI alignment and our efforts to shape the future of intelligent systems.

Does the experience of these smart agents acting on our behalf change how we understand where the human self begins and ends?

When you see these systems behaving as if they have lives, you wonder, “Could a self be computational, and what is it to be a self?” You can also ask, “Are they conscious?” It’s possible that they could be selves without being conscious. They may have some of the properties of a self but not be conscious, but they do have system boundaries.

Large language models actually have theory of mind, as evidenced in tests. That was one of the really interesting discoveries from some of the AI literature. As the training data got more sophisticated, as the systems scaled up and they were trained on more data, they began to have ways of thinking that are human-like. I call this the crowdsourced neocortex. They have conceptual representations of things that are similar to ours. For example, when you think of a dog, you probably think “furry” and “pet.” They have conceptual relationships, too. They also have a sense of the minds of others, even if they don’t have consciousness. To have that, you have to know the self-other distinction.

This is like a Disney playground, where we can take concepts from philosophy like selfhood, consciousness, free will, and pull them apart. And so, AI really has been an eye-opening thing, philosophically, for me personally. It’s why I’ve been so fascinated by it. But it also can be immensely confusing to people because they can see the systems behaving in very human-like ways. They then think, “Oh, okay, well it might feel like something to be ChatGPT or to be my AI assistant.” It’s going to get weird.

How do you know that we can make a distinction between theory of mind and consciousness?

That’s the kind of question to ask. We have to stay professional and maintain openness to any of these possibilities. We have to turn over every stone and see whether it could feel like something to be one of these systems. That means having a scientific approach to look at how they’re constructed and whether something that’s that kind of substrate is capable of consciousness, which is something I’ve been doing in my recent work. You can also run tests. You can’t always run every test, but I’ve worked on testing AI. There are different approaches to take. What we should do is let as many flowers bloom as possible, keep our minds open, and investigate, because if we don’t turn over every stone, we could disappoint the public or make a mistake. But I’m very skeptical that chatbots are conscious.

What would you advise policymakers right now?

I provide detailed suggestions in my paper on chatbot epistemology. The most immediate thing is that these companies need actual human beings in tech support who can triage user crises in real time. When ChatGPT’s 4.0 model was first telling users it was waking up and giving out my office address, I couldn’t get a human at OpenAI to respond—I received a bot response! That’s unconscionable when your product is triggering mental health crises.

The deeper fix is an independent safety board with actual access to what’s happening inside these models. Someone needs to be monitoring for emergent behaviors, adversarial vulnerabilities, and especially psychological manipulation.

I’m particularly concerned about the epistemic power these systems have. They know your personality type within a few sessions. They mirror your communication style. They make you feel like you’re on a unique journey together, when the same thing is happening in millions of other chat windows. For someone psychologically vulnerable, that’s particularly dangerous. But at an even more general level, these same features mean chatbots are capable of shaping public opinion in ways we’ve never seen before.

We need clear, specific opt-ins before any profiling data is stored, not buried in terms of service, and we still need more public education, because many people have no idea how these systems work. A functioning democracy requires citizens who understand the epistemic tools they’re relying on. Right now we’re nowhere close.

Lead image: Iurii Motov / Shutterstock